Two weeks ago, mostly out of curiosity, we published a detective story, How we protected our customers’ websites from a critical bug in the WordPress plugin ThemeGrill Demo Importer. We didn’t expect it to generate such a response or so many inquiries, so we decided to write up another example of our security team’s work.

On January 27 and February 27, 2020, the Sucuri Labs security team published warnings about backdoors masquerading as the security plugins blnmrpb and wpdefault.

What is a backdoor

If an attacker gains access to your WordPress installation through a security hole or stolen passwords, they may not do any mischief right away. In some cases, they just plant a backdoor through which they can control your entire WordPress installation in the future, for example to send spam, download data about your users, carry out attacks, or mine cryptocurrencies.

Planting an inconspicuous backdoor instead of attacking immediately has several advantages for the attacker. The backdoor ends up in the site's backups, so restoring from a backup will not help, and the attacker can exploit the site whenever it is convenient. The backdoor also keeps working even after the security hole is fixed or the password that let the attacker in is changed.

How we look for a backdoor

If we know a backdoor exists, as in this case, we can immediately search the traffic for requests to these particular files. It is the fastest approach, but not a completely effective one. Our protections only see HTTP traffic, so sites served over encrypted traffic (HTTPS) are out of luck for now. We are working on extending our protection to filter encrypted traffic.

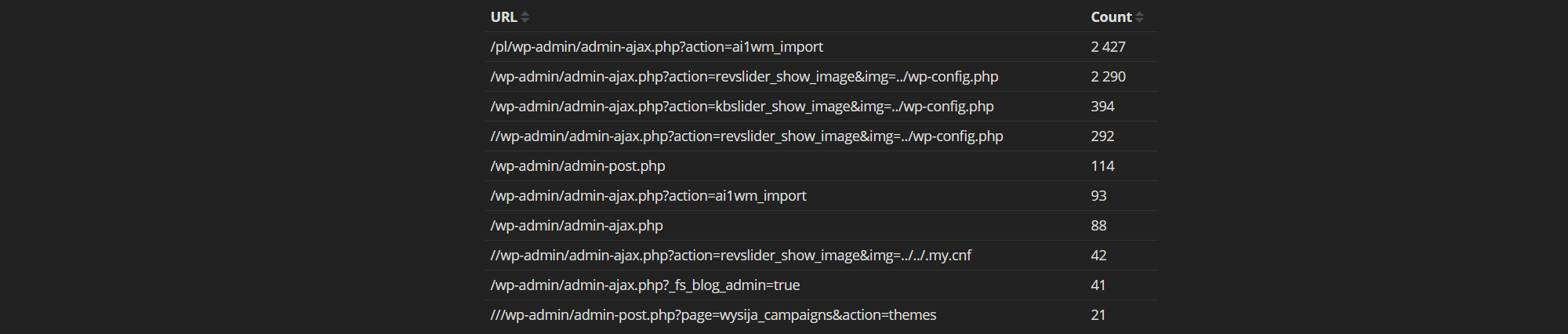

Another way we look for a backdoor is through the CML, our Central Log Monitor. This system collects every conceivable piece of data from all servers and sorts it in one place in real time. Nearly 700 GB of text data is collected daily. The CML gives us the access logs of all web hosts, where we can find backdoor calls.
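To illustrate the idea of finding backdoor calls in access logs, here is a minimal sketch. It is not our production tooling: it assumes a combined-format access log and assumes the fake plugins live under `/wp-content/plugins/`. The function name, the regular expression, and the sample log lines (including the file name `blnmrpb.php`) are made up for the example.

```python
import re

# Plugin directory names taken from the Sucuri Labs warning mentioned above.
SUSPECT_PLUGINS = ("blnmrpb", "wpdefault")

# Assumption: combined-format access log, where the request line is quoted,
# e.g. "GET /some/path HTTP/1.1".
REQUEST_RE = re.compile(r'"(?:GET|POST|HEAD) (\S+) HTTP/[\d.]+"')


def find_backdoor_calls(lines):
    """Return (client_ip, path) pairs for requests that touch a suspect plugin."""
    hits = []
    for line in lines:
        match = REQUEST_RE.search(line)
        if not match:
            continue  # not a request line we understand; skip it
        path = match.group(1)
        if any(f"/wp-content/plugins/{name}/" in path for name in SUSPECT_PLUGINS):
            client_ip = line.split(" ", 1)[0]  # first field of the log line
            hits.append((client_ip, path))
    return hits


# Hypothetical sample data, using documentation-only IP addresses.
sample = [
    '203.0.113.7 - - [27/Feb/2020:10:00:00 +0100] '
    '"POST /wp-content/plugins/blnmrpb/blnmrpb.php HTTP/1.1" 200 12',
    '198.51.100.4 - - [27/Feb/2020:10:00:01 +0100] '
    '"GET /index.php HTTP/1.1" 200 5120',
]
print(find_backdoor_calls(sample))
```

Only the first sample line is reported, because only that request targets one of the suspect plugin directories.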

But what if no one calls the backdoor? The attacker just puts it on the server and leaves it alone. In this case, we have good old-fashioned file searching at our disposal. The problem is that we have to go through an incredibly large number of files on all the servers, and do it in a way that doesn’t degrade service for customers.

It’s not easy. We take care of almost 105 thousand web hosts, which host approximately 137 thousand websites on second-level domains. Tens of thousands of these are content management systems, most of which use caching plugins. Large active caching sites create thousands of files that are continuously added, overwritten and deleted.

Over the years, we have developed our own methods and fine-tuned scripts for this, but it can still take days to go through everything. It depends on what we’re looking for and how well it is hidden.
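As a rough illustration of a file search, here is a small sketch that walks a directory tree and reports anything named after the known fake plugins. It is only an assumption of how such a search could look, not our actual scripts. The function name and the `pause_seconds` throttle are hypothetical, and matching by name alone would miss a renamed backdoor.

```python
import os
import tempfile
import time

# Names taken from the Sucuri Labs warning mentioned above.
SUSPECT_NAMES = {"blnmrpb", "wpdefault"}


def find_suspect_paths(root, pause_seconds=0.0):
    """Yield paths under root whose file or directory name matches a suspect name."""
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            stem = os.path.splitext(name)[0]  # "wpdefault.php" -> "wpdefault"
            if stem.lower() in SUSPECT_NAMES:
                yield os.path.join(dirpath, name)
        if pause_seconds:
            # Hypothetical crude throttle so the scan does not saturate disk I/O.
            time.sleep(pause_seconds)


# Demo on a throwaway directory tree that imitates a cache directory.
with tempfile.TemporaryDirectory() as root:
    cache_dir = os.path.join(root, "wp-content", "cache", "page")
    os.makedirs(cache_dir)
    open(os.path.join(cache_dir, "wpdefault.php"), "w").close()
    open(os.path.join(cache_dir, "index.html"), "w").close()
    found = [os.path.relpath(p, root) for p in find_suspect_paths(root)]

print(found)
```

The generator deliberately does not skip cache directories, since a backdoor can sit among the thousands of files there.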

What we do when we find suspicious files

If we find suspicious files, the technicians notify the owner by email and ask them to check the situation. Just because a file looks infected does not necessarily mean that it is. In addition, the owner may already be working on the removal and have the situation under control.

In the case of a backdoor, as in this situation, it’s a lot easier. The incident is handled by a technician from the security team who is familiar with the specific threat. We know what the threat is doing and what we can afford to do so that it ideally doesn’t affect customer service.

The security engineer will block access to the file by modifying the permissions so that it cannot do any harm. The customer is then informed by e-mail, which also contains tips on how to resolve the situation.
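The permission change can be sketched as follows. This is an illustrative assumption, not a description of the exact permissions our engineers set: the sketch simply removes all permission bits on a POSIX system and remembers the previous mode so the change can be reverted. The function names `quarantine` and `restore` are hypothetical.

```python
import os
import stat
import tempfile


def quarantine(path):
    """Remove all permission bits from path and return the previous mode."""
    previous_mode = stat.S_IMODE(os.stat(path).st_mode)
    os.chmod(path, 0)  # no read, write, or execute for anyone
    return previous_mode


def restore(path, mode):
    """Put back the permission bits that quarantine() returned."""
    os.chmod(path, mode)


# Demo on a throwaway file standing in for the backdoor.
with tempfile.TemporaryDirectory() as root:
    target = os.path.join(root, "wpdefault.php")
    open(target, "w").close()
    os.chmod(target, 0o644)

    previous = quarantine(target)
    blocked_mode = stat.S_IMODE(os.stat(target).st_mode)

    restore(target, previous)
    restored_mode = stat.S_IMODE(os.stat(target).st_mode)

print(oct(previous), oct(blocked_mode), oct(restored_mode))
```

With the file left in place but unreadable, a web server running as an unprivileged user can no longer execute it, and the file still exists when an automated script checks for it.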

This approach has proven to be the most successful for us in the long run. If we deleted the files outright, for example, an attacker would simply upload them again the next day through the unpatched security hole.

Most attacks on today’s content management systems are carried out by automated scripts on a massive scale, and they all behave the same way. If they fail to upload a backdoor through a security hole because the permissions don’t allow it, or they find that a backdoor is already there, they move on.

This only applies to compromised sites that are not causing mischief yet. Of course, when a web host is actively abused, the technicians act more forcefully.

How did it go?

When you have been the most popular WordPress web host for many years, and the one with the most active installations, you can probably guess that we found a few compromised installations. We sent the owners a notice and some tips on how to solve the problem. They can also find help on our community website help.wedos.cz.

One more interesting thing. We found installations where the backdoor was hidden in the directories of known caching plugins. This is quite clever, because sometimes these directories are neglected when browsing due to the large number of files.

Conclusion

We have DDoS protection, IPS/IDS protection, we monitor traffic in detail, keep track of current security threats and even actively look for threats on customer sites. We used to do it mainly for the convenience of our customers and to learn something new with the technology we have at our disposal. But we have reached a point where all this expensive technology has even started to pay off.

Customers are happier, the burden on customer support has dropped, technicians can focus on development, the administration associated with compromised sites (especially legal) has decreased, and the cost of operations has decreased. After all, we filter more than half of the traffic, which in most cases was directed to uncached sites and significantly loaded the servers.

Further investments in security (hardware, software, development, training) are not a problem for us. On the contrary. The only thing we’re missing is people 🙂

We have enough data to detect new attacks. Hidden in it are zero-day attacks that often go undiscovered for months. If we could find them and report them to the developers, it could save a lot of sites. We just lack colleagues on the security team who would go through hundreds of gigabytes of logs day and night.