We are always honest and open with you, our customers. We never hide issues that have affected us and may have affected your service. Sunday’s connectivity outage is no exception. Because of its scale, we have prepared an official statement here on our blog.

On 17. 11. 2019 between 12:27 and 12:44, i.e. for about 17 minutes, there was a connectivity failure on one of the routes of our datacenter. We dealt with the situation with the utmost priority.

Outage at our connectivity provider Kaora

At the Ce Colo (formerly Sitel) data centre, where Kaora has its backbone equipment, there was a power outage on one of the supply branches. According to Kaora’s official statement, however, they also experienced a problem on the other branch of the power supply, which caused their equipment to fail. In addition, some of their access switches did not work after the power supply was restored. They had to replace hardware and reconnect optical routes.

Why didn’t our backup route work?

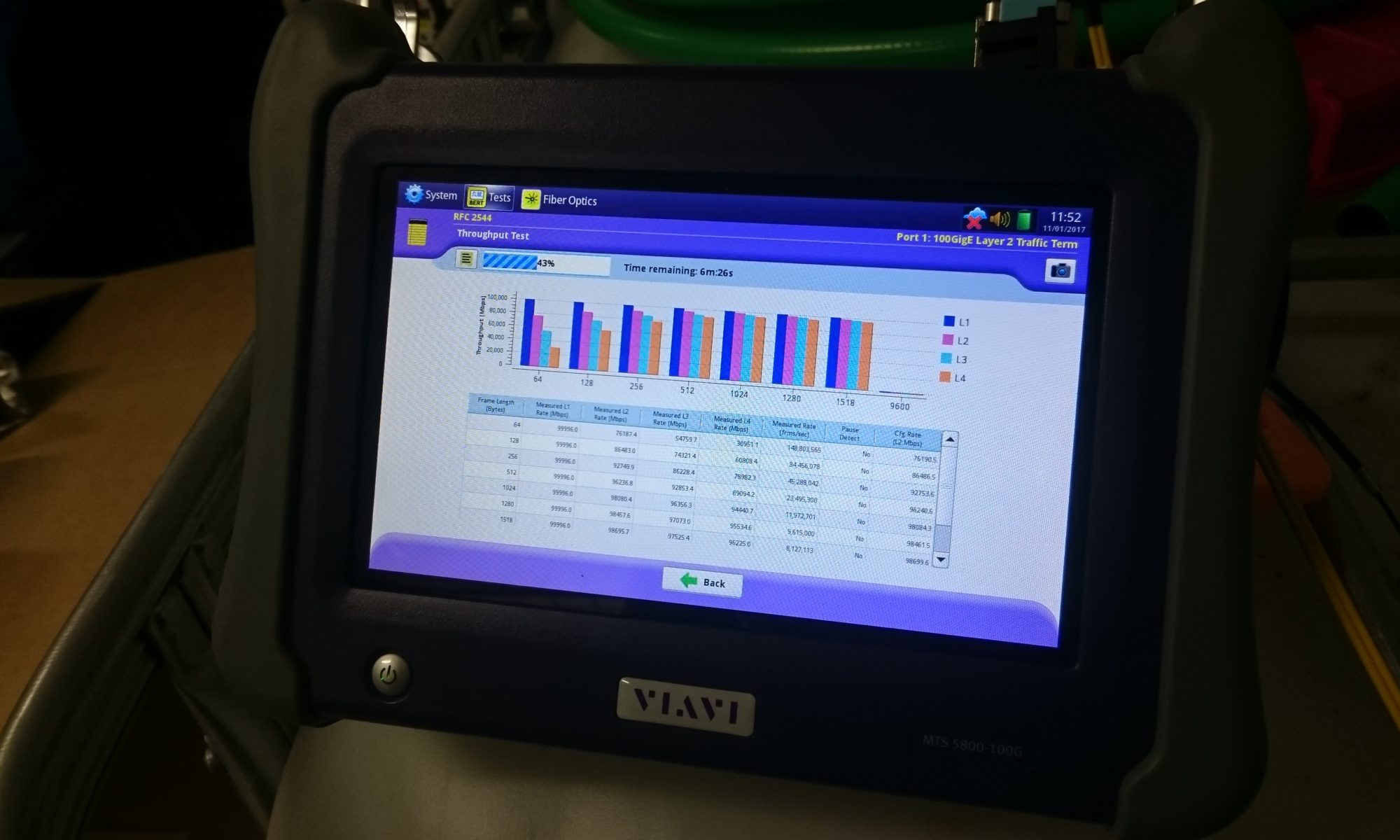

We have a total of 3 routes and pay for expensive internet connectivity over them (3x 100 Gbps connections + additional 10 Gbps connections). Each route follows a different geographic path, because the greatest danger is physical disruption of the route, for example when a digger cuts through a fibre optic cable, which happens from time to time. This year it happened twice and none of our customers even noticed.

- Route 1 via Tábor to SITEL (Ce Colo) in Prague, supplier O2 (Cetin).

- Route 2 via Písek to SITEL (Ce Colo) in Prague, supplier O2 (Cetin).

- Route 3 via Havlíčkův Brod to the GTS in Prague, supplier ČD Telematika, which physically ends at the Žižkov tower of the CRa.

As you can see, routes 1 and 2 converge at the Ce Colo datacenter, which was affected by the power outage. In case something like this or even worse happens, we have backup route 3, which leads to the GTS datacenter and, to be safe, comes from a different supplier. Our contract with ČDT states very clearly that under no circumstances may a packet on this route pass through Ce Colo.
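The reasoning about converging routes can be written down as a small check. The following is only an illustrative sketch: the `Route` class and the `surviving_routes` function are hypothetical names, and the data simply restates the three routes listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    via: str
    terminates_at: str  # facility where the route lands in Prague
    supplier: str


# Data taken from the route list above.
ROUTES = [
    Route("Route 1", "Tábor", "Ce Colo", "O2 (Cetin)"),
    Route("Route 2", "Písek", "Ce Colo", "O2 (Cetin)"),
    Route("Route 3", "Havlíčkův Brod", "GTS", "ČD Telematika"),
]


def surviving_routes(routes, failed_facility):
    """Return the routes that do not terminate at the failed facility."""
    return [r for r in routes if r.terminates_at != failed_facility]


survivors = surviving_routes(ROUTES, "Ce Colo")
print([r.name for r in survivors])  # ['Route 3']
```

On paper, a failure of Ce Colo leaves route 3 intact, which is exactly why it exists as the backup.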

So why didn’t this third backup route work as it should have?

The route as such worked. It even worked perfectly. The problem was again with Kaora. Without our knowledge, they had changed the network infrastructure settings (BGP routing) at some point in the preceding days or weeks and stopped advertising the default route to us. As a result, routing did not switch over to the backup route. Physically the backup route worked, but our internal routing, which is based on the OSPF protocol and takes its routes from BGP, did not know where to send the packets, so it did not send them anywhere. That is the simplified explanation.
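A simplified model of the lookup shows why a healthy link does not help when the default route is missing. This sketch is not our router configuration: the `lookup` function, the next-hop labels and the prefixes (a private range and a documentation address) are assumptions chosen purely for illustration.

```python
import ipaddress


def lookup(table, destination):
    """Longest-prefix match: return the next hop, or None if nothing matches."""
    dest = ipaddress.ip_address(destination)
    matches = [(net, hop) for net, hop in table.items() if dest in net]
    if not matches:
        return None  # no matching route: the packet is dropped
    _, best_hop = max(matches, key=lambda m: m[0].prefixlen)
    return best_hop


INTERNAL = ipaddress.ip_network("10.0.0.0/8")  # hypothetical internal prefix
DEFAULT = ipaddress.ip_network("0.0.0.0/0")    # the default route

with_default = {INTERNAL: "internal", DEFAULT: "route-3"}
without_default = {INTERNAL: "internal"}       # default no longer advertised

print(lookup(with_default, "203.0.113.10"))     # route-3
print(lookup(without_default, "203.0.113.10"))  # None
```

In the second table the link behind `route-3` may be perfectly healthy, but the lookup never reaches it because no entry covers the destination.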

It took us a few minutes to find the problem, work out a solution and prepare a different configuration, but in the meantime power was restored in the Prague datacenter and everything was running again. We were ready to reconfigure. Such a change is a major manual intervention in our network infrastructure and must be carried out with the utmost care.

Although the fault was not on our side, we admit that our share of the blame lies in having trusted our supplier Kaora so much in the first place. We also admit that we have been working on this for several months and already have a project under way with other independent suppliers and another route. Unfortunately, 100 Gbps connections are still rare in the Czech Republic and nobody can set one up at short notice.

What we’re going to do to make sure it doesn’t happen again

This was not an error on our part. We are not to blame for the power failure at the Ce Colo datacenter, for Kaora’s backup power through the second branch not working properly, or for the changes they made that prevented routing from switching to our third route. Even so, we know that something must be done about it, because sooner or later a similar situation will arise again. We host almost every fifth .cz domain, more and more large projects are moving to us, and next year we will launch WEDOS Cloud, WMS and a few other services that will depend on almost 100% availability.

Just yesterday we modified the configuration of our backbone routers so that we are not dependent on what BGP routing configurations we get from our vendors and have no problem if they make a change without our knowledge.
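This post does not go into the details of the change. One common way to gain this kind of independence is a locally configured fallback default route that is preferred less than any default learned from a supplier. The sketch below models only that general idea; the `DefaultRoute` class, the `select_default` function, the next-hop labels and the distance values are all illustrative assumptions, not our actual configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DefaultRoute:
    source: str    # where the route came from, e.g. "bgp" or "static"
    next_hop: str  # hypothetical label for the outgoing path
    distance: int  # lower value wins; numbers here are illustrative


def select_default(candidates) -> Optional[DefaultRoute]:
    """Pick the default route with the lowest distance, or None if there is none."""
    return min(candidates, key=lambda r: r.distance, default=None)


learned = DefaultRoute("bgp", "upstream-primary", distance=20)
fallback = DefaultRoute("static", "route-3", distance=250)

# While the supplier advertises a default, it is preferred.
print(select_default([learned, fallback]).next_hop)  # upstream-primary
# If the supplier stops advertising it, the local fallback takes over.
print(select_default([fallback]).next_hop)           # route-3
```

With a fallback of this kind in place, a supplier silently withdrawing the default route changes which path is used, but no longer leaves the router without any path at all.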

In addition, our network engineers have been tasked with preparing a proposal for regular live tests of outages on the various routes. These will be similar to the rigorous and stressful tests that our motor generators have to undergo every month. See the article Hluboká was without power for an hour, except for WEDOS.

Yes, we test generators, UPS and cooling every week, and once a month we run a live test under load (we simply trip the breakers and see what happens). We will now regularly do the same with the network, individual network elements and connections.
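What such a network drill needs to check can be sketched in a few lines. This is only a toy model under stated assumptions: the `Uplink` class, the `drill` function and the uplink names are hypothetical, and the state of the third uplink mirrors the situation described earlier in this post.

```python
from dataclasses import dataclass


@dataclass
class Uplink:
    name: str
    link_up: bool            # physical link is working
    has_default_route: bool  # routing actually offers a way out over it

    def usable(self) -> bool:
        return self.link_up and self.has_default_route


def drill(uplinks, fail):
    """Simulate taking the named uplinks down; return the names still usable."""
    return [u.name for u in uplinks if u.name not in fail and u.usable()]


uplinks = [
    Uplink("route-1", True, True),
    Uplink("route-2", True, True),
    Uplink("route-3", True, False),  # link up, but no default route
]

remaining = drill(uplinks, fail={"route-1", "route-2"})
print(remaining)  # []
```

A test that only looked at `link_up` would have reported route 3 as healthy. Only a drill that takes the primary routes out of service reveals that nothing usable remains.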

In connection with the launch of the second datacenter, the company’s management had already decided that another reliable backup route needed to be built. It will run through České Budějovice and use the fibre of ČDT, which operates what is literally the backbone network infrastructure of the state. If we succeed, we will not depend on the Prague datacentres at all, and it will not matter what happens in them or to them. In České Budějovice we will connect additional 100 Gbps links to other networks. This will make us 100% independent of Prague. This incident is a reason to make the fourth route a priority. The original plan was to complete it next year, but we have decided to speed it up and finish it by the end of this year!

Each of our datacentres will therefore have 2 independent routes to other networks, and our two datacentres are connected to each other by 2 independent routes (one around Hluboká, one through the Hluboká castle). None of these routes run alongside one another. Each datacenter thus has several options for interconnection.

Conclusion

We currently have connectivity via the three routes mentioned above and there will be a fourth route. We have 100 Gbps connectivity from Cogent, 100 Gbps from Telia, 2 x 100 Gbps from Kaora (one at Ce Colo and the other at the CRa tower), and in addition a backup connection of 10 Gbps directly to Telia and 10 Gbps to the ČDT network.

We apologize to all customers for any complications. We’ve done our best to get your service going as soon as possible, and we plan to put measures in place in the future to minimize similar problems caused by third-party service.

EDIT on 21. 12. 2019

Currently, the backup connectivity is supplemented by a connection to ČD Telematika in their Prague U2 datacentre. From there, we also have a leased 100 Gbps fibre optic route to another TTC datacentre, where we now have additional backup connectivity to Kaora. We are negotiating with other providers in these locations for backup connectivity.

In January 2020, we would like to launch another backup 100 Gbps fibre route to České Budějovice and connect with other providers there. This will avoid dependence on Prague.